This study takes advantage of a school pron opportunity to learn about the development and principles of OAuth.

Research on OAuth Authorization Framework Evolution: Security Enhancement Mechanisms from OAuth 1.0 to PKCE

3. Technology Overview

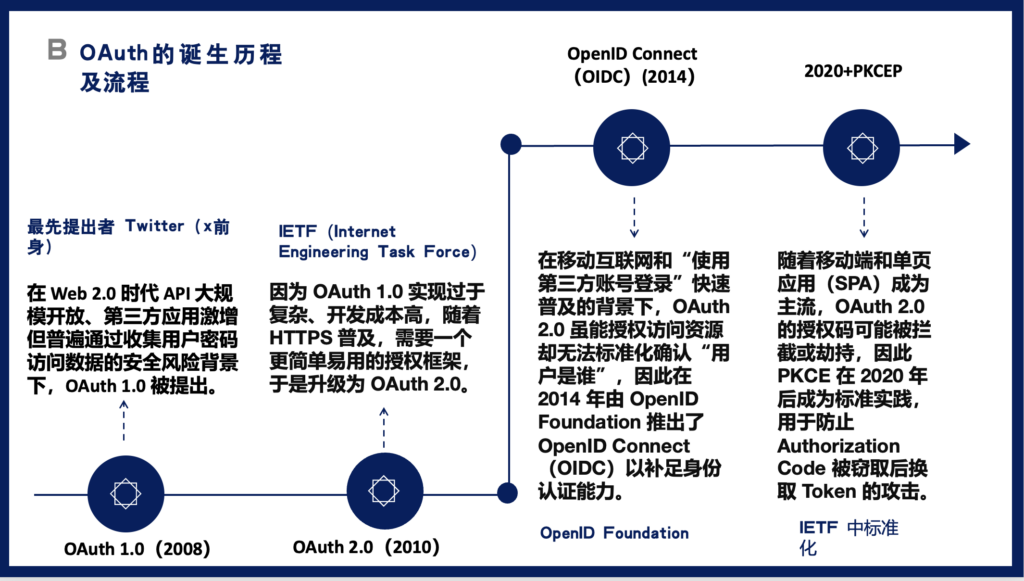

The evolution of the OAuth authorization framework reflects a shift in Internet security architecture from simple password sharing to cryptographically protected delegation. The path began with OAuth 1.0 in 2007, continued with the simplified OAuth 2.0 (first drafted in 2010 and published as RFC 6749 in 2012), gained an identity layer with OpenID Connect (OIDC) in 2014, and was hardened for mobile and single-page applications by Proof Key for Code Exchange (PKCE), which was standardized in 2015 and became a general recommendation after 2020. Each iteration responded to the security threats and application requirements of its time, and together they form a layered, modular authorization ecosystem. OAuth 1.0 solved the password sharing problem with signature-based authorization, but its implementation complexity hindered adoption; OAuth 2.0 simplified the protocol flow and relied on HTTPS for transport security, which greatly helped the API economy grow; OIDC added a standardized authentication layer on top of OAuth 2.0 and made "log in with a third-party account" commonplace; PKCE added cryptographic protection against authorization code interception for public clients, closing a major remaining gap in the framework. These four milestones represent protocol evolution and also a change in security philosophy from "trust but verify" toward "zero trust architecture," with core security objectives covering four dimensions: Authorization, Authentication, Session Management, and Privacy Protection.

4. Origin, Motivation, and Evolution Timeline

Background and Motivation

The OAuth framework grew out of the security challenges created by the explosive growth of the API economy in the Web 2.0 era. During 2006-2007, with the rise of social platforms such as Twitter and Flickr, it became common for third-party applications to need access to users' data on these platforms. The solution at the time was primitive and dangerous: users gave their usernames and passwords directly to third-party applications. This practice violated the principle of least privilege and carried serious risks: the third-party application gained full control of the account, every additional application holding the password was another place it could leak, and users could not revoke one application's access without changing the password for all of them. In 2007, Twitter engineer Blaine Cook recognized the severity of this problem and began discussing, with Chris Messina, Larry Halff, and others, a mechanism for authorizing third-party access without sharing passwords. The discussion quickly expanded to major Internet companies including Google, Yahoo, and AOL, and produced the core design of OAuth 1.0: authorize through tokens rather than passwords, and protect request integrity and authenticity with digital signatures.

OAuth 2.0 emerged from reflection on practical experience with OAuth 1.0. Although OAuth 1.0 offered strong security guarantees in theory, it showed serious usability problems in deployment. Developers had to implement intricate signature schemes (HMAC-SHA1 or RSA-SHA1), and every API call required a signature computation, which increased client complexity and added processing overhead. More importantly, as HTTPS became cheaper and more widely deployed around 2010, the transport layer could provide much of the protection that application-layer signatures had supplied, making those signatures seem redundant. Facebook's early practice suggested that a simpler Bearer Token mechanism protected by HTTPS could reach a comparable level of security for many deployments while greatly lowering the barrier for developers. OAuth 2.0's design goal therefore shifted from "perfect security" to "practical security," with a modular design that supports several grant types (Authorization Code, Implicit, Client Credentials, Resource Owner Password) for different scenarios.

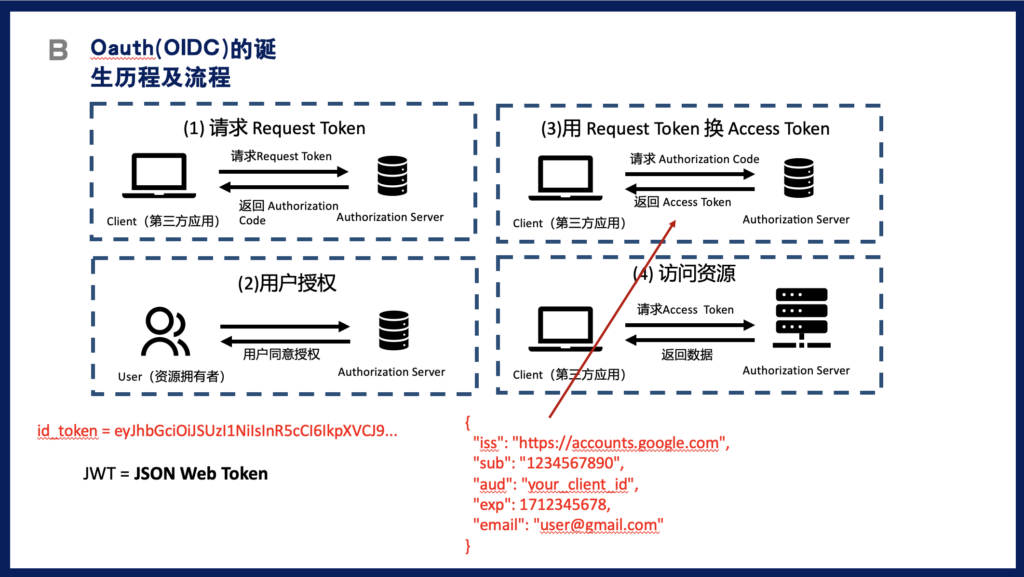

OpenID Connect marked the industry's mature understanding of the distinction between "authorization" and "authentication." OAuth 2.0 was very successful at authorization, but it answers the question "what may this application do on the user's behalf" rather than "who is the user." As social login spread, more and more applications wanted to use Google, Facebook, and similar accounts as a unified sign-in, but OAuth 2.0 had no standardized way to represent user identity, so each provider implemented its own, which raised integration costs and security risks. In 2014, the OpenID Foundation built an identity layer on top of OAuth 2.0. By introducing the ID Token (a JWT containing user identity claims), a standardized UserInfo endpoint, and discovery and dynamic registration mechanisms, it enabled interoperable federated authentication. OIDC's success lies in technical innovation and also in the ecosystem it established, which includes Google, Microsoft, and Amazon, and which made "log in with an XX account" standard practice on the Internet.

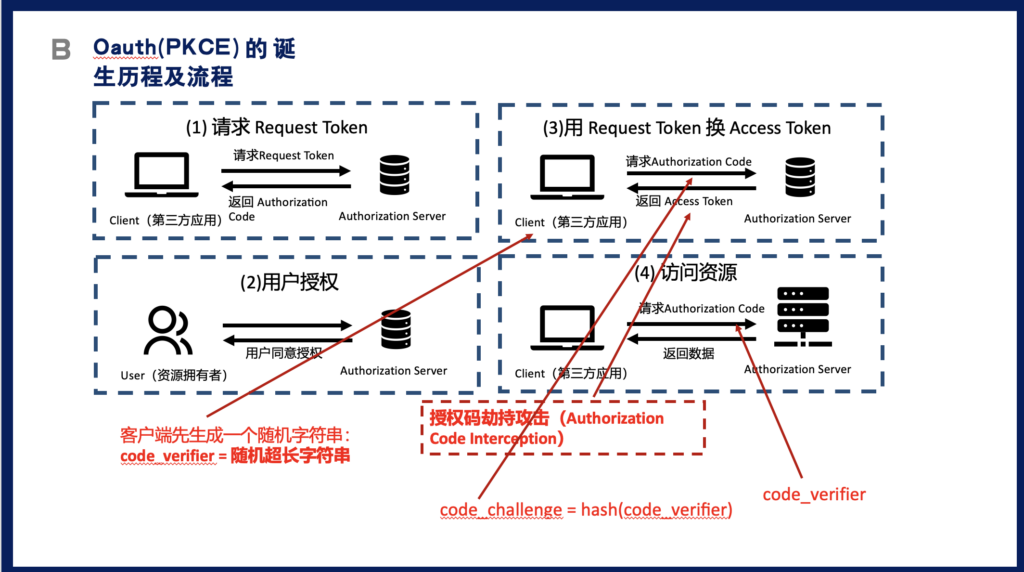

The introduction of PKCE was OAuth's response to new threats in the mobile Internet era. With the spread of smartphones and the rise of single-page applications (SPAs), public clients (applications that cannot securely store a client secret) became mainstream, and the traditional authorization code flow faced new security challenges. On mobile platforms, a malicious app could intercept the authorization code by registering the same URL scheme as the legitimate app; in an SPA, all code is exposed in the browser, so a client secret cannot be protected. In 2015, the IETF published RFC 7636, which defines PKCE: the client generates a fresh pair of values (code_verifier and code_challenge) for each authorization, and the one-way property of SHA-256 prevents a stolen authorization code from being redeemed. PKCE does not require the client to store any long-term secret, because each authorization flow uses a new verifier. Even if an attacker intercepts the authorization code, it cannot be exchanged for an access token without the corresponding verifier. After 2020, as the OAuth 2.1 draft advanced, PKCE moved from an optional mechanism for public clients toward a requirement for all clients using the authorization code flow, reflecting a shift toward "secure by default."

Evolution Timeline

| Date | Milestone | Technical Focus | Evidence |
|---|---|---|---|
| December 2007 | OAuth 1.0 draft first proposed at OAuth Summit, with participation from Twitter, Google, Yahoo, and others | OAuth 1.0: signature-based authorization mechanism that solves password sharing | [1] Hammer-Lahav, E. (2007). OAuth Core 1.0. OAuth Community. |
| April 2010 | First OAuth 2.0 draft released; Facebook and Microsoft join standardization | OAuth 2.0: simplified protocol, HTTPS-dependent, multiple grant types | [2] Hardt, D. (2012). The OAuth 2.0 Authorization Framework. RFC 6749. |
| February 2014 | OpenID Connect 1.0 officially released; Google among the first implementers | OIDC: identity layer on OAuth 2.0, standardized user info | [3] Sakimura, N., et al. (2014). OpenID Connect Core 1.0. |
| September 2015 | RFC 7636 publishes the PKCE specification, aimed at mobile app security | Early PKCE: authorization code protection for public clients | [4] Sakimura, N., et al. (2015). Proof Key for Code Exchange. RFC 7636. |
| August 2020 | OAuth 2.0 Security Best Current Practice (an Internet-Draft at the time) recommends PKCE | PKCE adoption: shift from optional to recommended | [5] Lodderstedt, T., et al. (2020). OAuth 2.0 Security Best Current Practice. |
| August 2021 | Google mandates PKCE for all OAuth clients | PKCE enforcement: large-scale industry implementation | [6] Google Identity Platform. (2021). PKCE Migration Guide. |
| October 2024 | OAuth 2.1 draft requires PKCE for clients using the authorization code flow | OAuth 2.1: integrating all security best practices | [7] Hardt, D., Parecki, A., & Lodderstedt, T. (2024). The OAuth 2.1 Authorization Framework (Internet-Draft). |

5. How It Works

5.1 System Model and Assumptions

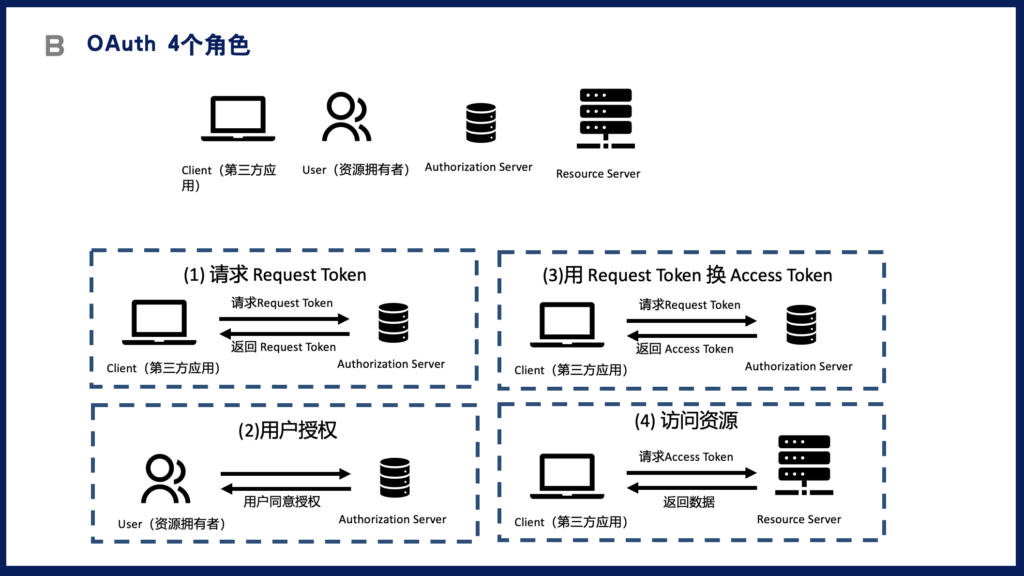

The OAuth system architecture has kept a relatively stable four-role model throughout its evolution, but the implementation mechanisms and security assumptions differ significantly at each stage. The Resource Owner is the end user who owns the protected resources and has always held the final authorization decision. The Client is the application that requests access to protected resources on behalf of the resource owner; it evolved from the trusted application of OAuth 1.0, to the classified clients of OAuth 2.0 (confidential and public), to the zero-trust assumption of the PKCE era. The Authorization Server verifies the resource owner's identity and issues credentials; it evolved from OAuth 1.0's two-token mechanism (Request Token and Access Token), to OAuth 2.0's separation of Authorization Code and Access Token, with OIDC adding the ID Token as an identity credential and PKCE adding an extra verification step to the authorization code exchange. The Resource Server hosts the protected resources and validates access requests; validation was simplified from OAuth 1.0's per-request signature check to OAuth 2.0's Bearer Token check, self-contained JWT validation became possible in the OIDC era, and PKCE mainly affects how tokens are obtained rather than how they are used.

The evolution of the trust model reflects changes in the threat landscape and advances in cryptographic practice. OAuth 1.0 was built on a relatively idealized trust model: the network is untrusted (hence signatures), but the client is trusted (it can keep its secret safe). This model suited early server-side applications but adapted poorly to mobile and front-end applications. OAuth 2.0 adopted a more flexible trust model: it requires HTTPS for transport security and uses client classification (confidential vs. public) and different grant types to match different trust levels. This design greatly widened OAuth's applicability but also introduced new attack surfaces. OIDC added an identity trust chain on top of OAuth 2.0: digitally signed ID Tokens give the client a verifiable identity assertion from the authorization server, and asymmetric signatures let the client check the authenticity of identity claims even over an untrusted network. PKCE marked a shift toward zero-trust architecture: it no longer assumes the client can keep a secret, and instead proves that the token request comes from the party that started the flow through a freshly generated verifier and a cryptographic challenge-response. This philosophy aligns closely with the security requirements of modern cloud-native applications.

The use of cryptography shows a trend from heavyweight to lightweight and from static to dynamic. OAuth 1.0 relied heavily on signatures: every API request had to be signed with HMAC-SHA1 or RSA-SHA1, and the signature input included the HTTP method, the URL, all parameters, a timestamp, and a nonce. This provided strong integrity and authenticity guarantees but was complex to implement and error-prone. OAuth 2.0 greatly reduced the cryptographic requirements: it relies mainly on the transport encryption provided by TLS and uses plain Bearer Tokens at the application layer. This lowered the implementation barrier, but it also means a leaked token can be used by whoever holds it. OIDC reintroduced cryptography in a more modern form: the ID Token is a JWT (JSON Web Token) signed with algorithms such as RS256 (RSA) or ES256 (ECDSA), which provides both security and good interoperability. PKCE's cryptographic design is deliberately minimal: it uses only the SHA-256 hash function and BASE64URL encoding. The challenge-response pair consists of code_verifier (a random string of 43 to 128 characters) and code_challenge (the BASE64URL-encoded SHA-256 hash of the verifier), and it achieves strong protection without adding any key management burden. The design embodies the "simple but secure" philosophy.

5.2 Protocol Workflow

Figure 1. OAuth evolution: complete flow comparison from OAuth 1.0 to PKCE

    ┌──────────────────────────────────────────────────────────────────────────┐
    │ OAuth 1.0 (2007-2010): Signature Era                                     │
    ├──────────────────────────────────────────────────────────────────────────┤
    │ Client                    Authorization Server          Resource Server  │
    │   │                                 │                          │         │
    │   │──(1) Get Request Token─────────►│                          │         │
    │   │    (signed request)             │                          │         │
    │   │◄──Request Token─────────────────│                          │         │
    │   │                                 │                          │         │
    │   │──(2) Redirect user to authorize►│                          │         │
    │   │                                 │◄─ user authorization ─►  │         │
    │   │◄──Authorized────────────────────│                          │         │
    │   │                                 │                          │         │
    │   │──(3) Exchange for Access Token─►│                          │         │
    │   │    (signed request)             │                          │         │
    │   │◄──Access Token──────────────────│                          │         │
    │   │                                 │                          │         │
    │   │──(4) Access Resource──────────────────────────────────────►│         │
    │   │    (sign every request)         │                          │         │
    │   │◄────────────────────Protected Resource─────────────────────│         │
    └──────────────────────────────────────────────────────────────────────────┘

    ┌──────────────────────────────────────────────────────────────────────────┐
    │ OAuth 2.0 (2010-2014): Simplification Era                                │
    ├──────────────────────────────────────────────────────────────────────────┤
    │ Client                    Authorization Server          Resource Server  │
    │   │                                 │                          │         │
    │   │──(1) Authorization Request─────►│                          │         │
    │   │    (over HTTPS)                 │                          │         │
    │   │                                 │◄─ user login & consent ─►│         │
    │   │◄──(2) Authorization Code────────│                          │         │
    │   │                                 │                          │         │
    │   │──(3) Token Request─────────────►│                          │         │
    │   │    POST /token                  │                          │         │
    │   │    {code, client_id, secret}    │                          │         │
    │   │◄──Access Token──────────────────│                          │         │
    │   │    (Bearer Token)               │                          │         │
    │   │                                 │                          │         │
    │   │──(4) Access Resource──────────────────────────────────────►│         │
    │   │    Authorization: Bearer {token}│                          │         │
    │   │◄────────────────────Protected Resource─────────────────────│         │
    └──────────────────────────────────────────────────────────────────────────┘

    ┌──────────────────────────────────────────────────────────────────────────┐
    │ OpenID Connect (2014-2020): Identity Era                                 │
    ├──────────────────────────────────────────────────────────────────────────┤
    │ Client                    Authorization Server          Resource Server  │
    │   │                                 │                          │         │
    │   │──(1) Authorization Request─────►│                          │         │
    │   │    scope=openid profile email   │                          │         │
    │   │                                 │◄─ user authentication ──►│         │
    │   │◄──(2) Authorization Code────────│                          │         │
    │   │                                 │                          │         │
    │   │──(3) Token Request─────────────►│                          │         │
    │   │◄──Token Response────────────────│                          │         │
    │   │    {                            │                          │         │
    │   │      access_token: "...",       │                          │         │
    │   │      id_token: "..." (new: JWT) │                          │         │
    │   │    }                            │                          │         │
    │   │                                 │                          │         │
    │   │    [verify ID Token signature]  │                          │         │
    │   │    [read user identity claims]  │                          │         │
    │   │                                 │                          │         │
    │   │──(4) Access UserInfo Endpoint─────────────────────────────►│         │
    │   │◄───────────────────────User Profile────────────────────────│         │
    └──────────────────────────────────────────────────────────────────────────┘

┌─────────────────────────────────────────────────────────────────────────────────────────┐

│ PKCE (2020+) - 零信任时代 Zero Trust Era │

├─────────────────────────────────────────────────────────────────────────────────────────┤

│ Client (SPA/Mobile) Authorization Server Resource Server │

│ │ │ │ │

│ │ ┌─────────────────────────┐ │ │ │

│ │ │生成PKCE密钥对 │ │ │ │

│ │ │verifier = random(43-128) │ │ │ │

│ │ │challenge = SHA256(verifier)│ │ │ │

│ │ └─────────────────────────┘ │ │ │

│ │ │ │ │

│ │───① Authorization Request─────►│ │ │

│ │ &code_challenge={challenge} │ 存储 Store: │ │

│ │ &code_challenge_method=S256 │ code ↔ challenge │ │

│ │ │ │ │

│ │◄──② Authorization Code────────│ │ │

│ │ │ │ │

│ │───③ Token Request─────────────►│ │ │

│ │ { │ 验证 Verify: │ │

│ │ code: "...", │ SHA256(verifier) == challenge │ │

│ │ code_verifier: {verifier} │ │ │

│ │ } │ │ │

│ │◄──────Access Token─────────────│ │ │

│ │ │ │ │

│ │───④ Access Resource──────────────────────────────────────────►│ │

│ │◄───────────────────Protected Resource─────────────────────────│ │

│ │ │ │ │

│ │ 攻击者即使截获code也无法使用 │ │ │

│ │ Attacker cannot use intercepted code │ │

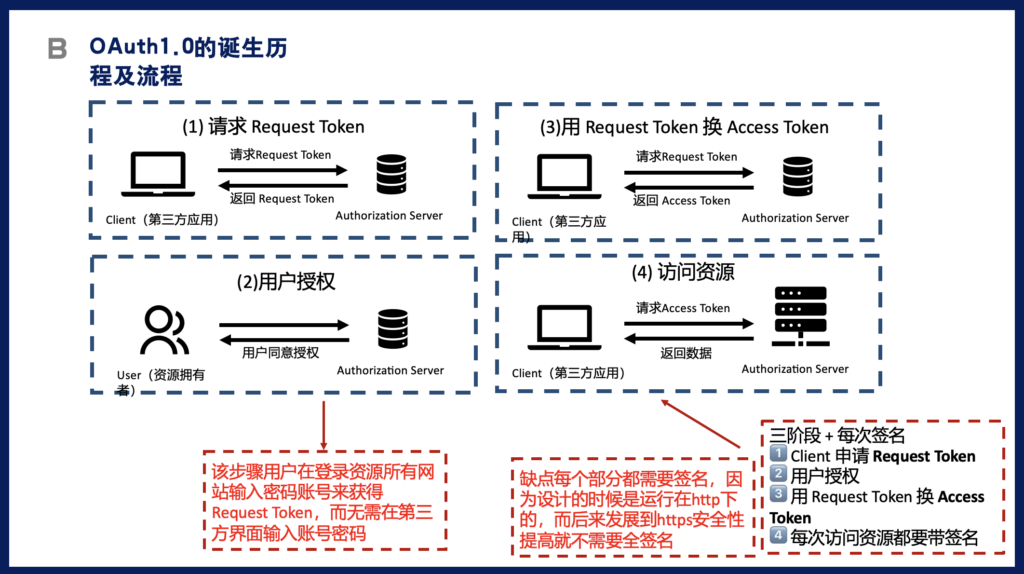

└─────────────────────────────────────────────────────────────────────────────────────────┘OAuth 1.0的工作流程体现了基于签名的安全哲学,其核心在于通过密码学签名确保每个请求的完整性和不可否认性。整个流程分为三个主要阶段,每个阶段都需要复杂的签名计算。首先,客户端需要获取临时凭证(Request Token),这个请求必须包含oauth_consumer_key、oauth_signature_method、oauth_timestamp、oauth_nonce等参数,并使用客户端密钥和令牌密钥(此时为空)计算签名。授权服务器验证签名后返回oauth_token和oauth_token_secret。第二阶段,客户端将用户重定向到授权服务器,用户完成身份验证和授权后,授权服务器将用户重定向回客户端,并附带oauth_verifier参数。第三阶段,客户端使用Request Token、oauth_verifier和新的签名请求Access Token,此时的签名计算需要包含Request Token Secret。最终获得Access Token后,客户端在访问资源时必须为每个请求计算签名,签名的基础字符串包含HTTP方法、规范化的URL和所有参数的规范化字符串。这种设计虽然提供了强大的安全保证,但实现复杂度极高,特别是签名基础字符串的构建和参数规范化过程容易出错,导致互操作性问题频发。

The OAuth 1.0 workflow embodies a signature-based security philosophy, with its core ensuring the integrity and non-repudiation of each request through cryptographic signatures. The entire process is divided into three main phases, each requiring complex signature calculations. First, the client needs to obtain temporary credentials (Request Token). This request must include parameters like oauth_consumer_key, oauth_signature_method, oauth_timestamp, oauth_nonce, and calculate a signature using the client key and token key (empty at this point). After the authorization server verifies the signature, it returns oauth_token and oauth_token_secret. In the second phase, the client redirects the user to the authorization server. After the user completes authentication and authorization, the authorization server redirects the user back to the client with an oauth_verifier parameter. In the third phase, the client uses the Request Token, oauth_verifier, and a new signature to request the Access Token. The signature calculation at this point needs to include the Request Token Secret. After finally obtaining the Access Token, the client must calculate a signature for each request when accessing resources. The signature base string includes the HTTP method, normalized URL, and normalized string of all parameters. While this design provided strong security guarantees, the implementation complexity was extremely high. The construction of signature base strings and parameter normalization processes were particularly error-prone, leading to frequent interoperability issues.
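To make the signing step concrete, here is a minimal Python sketch of the HMAC-SHA1 method, assuming the RFC 5849 rules for percent-encoding and parameter sorting. The function names are hypothetical, and the sketch leaves out parts of the real normalization (lowercasing the scheme and host, dropping default ports, merging query-string and body parameters), so it illustrates the idea rather than a complete implementation.

```python
import base64
import hashlib
import hmac
from urllib.parse import quote


def percent_encode(value: str) -> str:
    # Only unreserved characters (letters, digits, "-", ".", "_", "~") stay unescaped.
    return quote(value, safe="-._~")


def signature_base_string(method: str, base_url: str, params: dict[str, str]) -> str:
    # Encode each key and value, sort the pairs, then join them as k=v&k=v.
    encoded = sorted((percent_encode(k), percent_encode(v)) for k, v in params.items())
    normalized = "&".join(f"{k}={v}" for k, v in encoded)
    # The three parts are encoded again and joined with "&".
    return "&".join([method.upper(), percent_encode(base_url), percent_encode(normalized)])


def sign_hmac_sha1(base_string: str, client_secret: str, token_secret: str = "") -> str:
    # The key is "client_secret&token_secret"; the token secret is empty
    # while the client is still requesting the temporary credentials.
    key = f"{percent_encode(client_secret)}&{percent_encode(token_secret)}"
    digest = hmac.new(key.encode(), base_string.encode(), hashlib.sha1).digest()
    return base64.b64encode(digest).decode()
```

Every request needs this computation, and any disagreement between client and server about encoding or ordering produces a different base string and therefore a rejected signature, which is where many of the interoperability problems came from.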

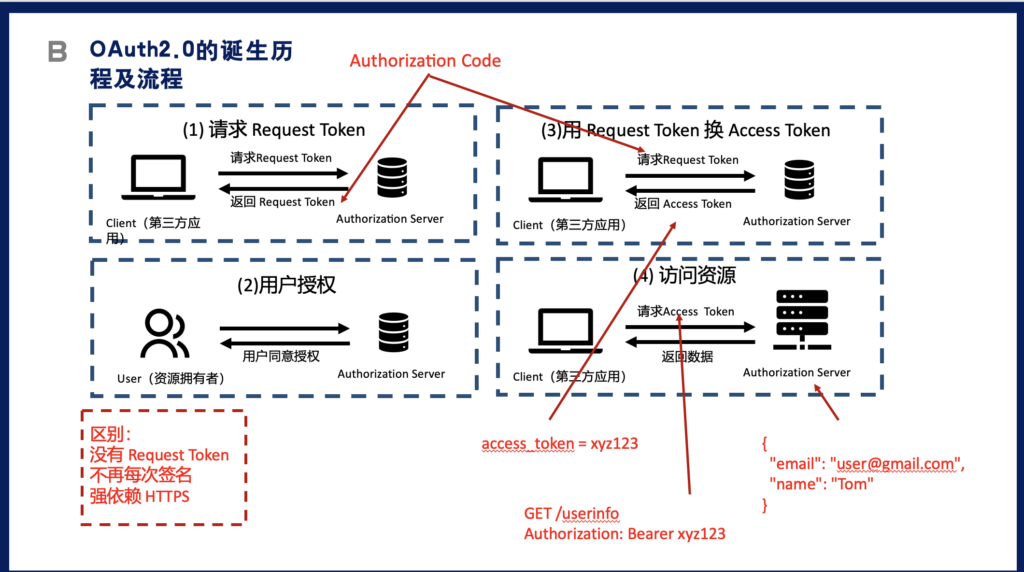

The OAuth 2.0 workflow gains usability and flexibility through simplification and modularization. Taking the most common flow, the Authorization Code Flow, as an example, the process has four clear steps. The client first builds an authorization request and redirects the user to the authorization server's /authorize endpoint; the request carries response_type=code, client_id, redirect_uri, scope, and state. Unlike OAuth 1.0, this request is not signed; it relies on HTTPS and on the state parameter (CSRF protection). After the user authenticates and consents, the authorization server issues a short-lived authorization code (RFC 6749 recommends a maximum lifetime of 10 minutes) and redirects the user back to the client's redirect_uri. The client then sends a POST request to the authorization server's /token endpoint with grant_type=authorization_code, code, redirect_uri, client_id, and, for confidential clients, client_secret. After validating these parameters, the authorization server returns access_token, token_type (usually "Bearer"), expires_in, an optional refresh_token, and related fields. Finally, the client calls the resource server with the Bearer Token by adding "Bearer {token}" to the Authorization header, with no signature computation. This design greatly reduced implementation difficulty and helped OAuth 2.0 quickly become the industry standard.
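The client side of the authorization code flow can be sketched in a few lines of Python. The function names and the example.com-style URLs used with them are hypothetical; only the parameter names come from the flow described above, and the actual HTTP calls are left out.

```python
import hmac
import secrets
from urllib.parse import parse_qs, urlencode, urlsplit


def build_authorization_url(authorize_endpoint: str, client_id: str,
                            redirect_uri: str, scope: str) -> tuple[str, str]:
    # A fresh random state value ties the callback to this browser session.
    state = secrets.token_urlsafe(32)
    query = urlencode({
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,
        "state": state,
    })
    return f"{authorize_endpoint}?{query}", state


def extract_code(callback_url: str, expected_state: str) -> str:
    # Reject the callback unless it echoes the state issued for this session.
    params = parse_qs(urlsplit(callback_url).query)
    returned_state = params.get("state", [""])[0]
    if not hmac.compare_digest(returned_state.encode(), expected_state.encode()):
        raise ValueError("state mismatch: possible CSRF")
    return params["code"][0]


def build_token_request(code: str, client_id: str, client_secret: str,
                        redirect_uri: str) -> dict[str, str]:
    # Form fields for the POST to the token endpoint (confidential client).
    return {
        "grant_type": "authorization_code",
        "code": code,
        "redirect_uri": redirect_uri,
        "client_id": client_id,
        "client_secret": client_secret,
    }
```

Compared with the OAuth 1.0 signing procedure, nothing here is computed per request: the client only builds a URL, checks one value on the callback, and posts a form over HTTPS.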

OpenID Connect adds an authentication layer on top of OAuth 2.0; its workflow keeps OAuth 2.0's simplicity while providing standardized user identity information. Its core innovation is the ID Token, a JWT (JSON Web Token) that carries identity claims about the user. In the authorization request, the client must include "openid" in the scope parameter and may add standard scopes such as "profile", "email", "address", and "phone" to request specific user information. After the user consents, the authorization server returns the authorization code as in OAuth 2.0, and the subsequent token response contains an ID Token alongside the access token. The ID Token consists of a JWT header (which names the signature algorithm), a payload (standard claims such as iss for the issuer, sub for the subject identifier, aud for the audience, exp for the expiration time, and iat for the issue time, plus optional user information such as name and email), and a signature. On receipt, the client must verify the signature (using the authorization server's public key), check that iss and aud are correct, check exp to ensure the token has not expired, and, if it sent a nonce in the request, check that the nonce claim matches. Besides the ID Token, OIDC defines a UserInfo endpoint that the client can call with the Access Token to obtain more detailed user information. OIDC can therefore serve simple sign-in (ID Token only) as well as scenarios that need detailed user data (ID Token plus UserInfo).

The PKCE workflow solves the public client problem with a small cryptographic addition that leaves the basic structure of OAuth 2.0 unchanged. Its core is a pair of values generated on the client for each flow: code_verifier and code_challenge. Before the authorization flow starts, the client generates a high-entropy random string as code_verifier, 43 to 128 characters long, drawn from the URL-safe character set (A-Z, a-z, 0-9, -, ., _, ~). It then computes code_challenge = BASE64URL(SHA256(code_verifier)). Because this transformation is one-way, an attacker who observes the challenge cannot feasibly derive the verifier. In the authorization request, in addition to the standard OAuth 2.0 parameters, the client sends code_challenge and code_challenge_method (either "S256" for the SHA-256 transformation or "plain" for no transformation, which is strongly discouraged). The authorization server stores the code_challenge together with the authorization code it issues. When the client redeems the authorization code, it must include the original code_verifier in the token request. The authorization server recomputes BASE64URL(SHA256(received_verifier)), compares the result with the stored challenge, and issues tokens only if they match. The strength of the mechanism is that even if a malicious application intercepts the authorization code, it does not have the original code_verifier, which never appears in the authorization request or the redirect and is sent only in the TLS-protected token request, so the attacker cannot complete the token exchange. In addition, because every authorization uses a new verifier/challenge pair, compromising one flow does not affect the security of others.
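Both sides of the S256 method fit in a short Python sketch. The function names are hypothetical; the transformation itself is the one defined in RFC 7636, and the sketch assumes the verifier is built from 32 random bytes, which yields the minimum length of 43 characters.

```python
import base64
import hashlib
import hmac
import secrets


def make_code_verifier(num_bytes: int = 32) -> str:
    # 32 random bytes become a 43-character BASE64URL string once padding is removed.
    return base64.urlsafe_b64encode(secrets.token_bytes(num_bytes)).rstrip(b"=").decode("ascii")


def make_code_challenge(code_verifier: str) -> str:
    # S256: BASE64URL(SHA256(ASCII(code_verifier))), without "=" padding.
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def verify_code_verifier(code_verifier: str, stored_challenge: str) -> bool:
    # Server side: recompute the challenge and compare in constant time.
    recomputed = make_code_challenge(code_verifier)
    return hmac.compare_digest(recomputed.encode(), stored_challenge.encode())
```

The client keeps the verifier in memory for the duration of one flow, sends only the challenge with the authorization request, and reveals the verifier in the token request, where the server runs the comparison.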

6. Threat Model & Security Properties

6.1 Threat Model Analysis

The threat model that OAuth faced evolved from simple to complex and from one-dimensional to multi-dimensional. In the OAuth 1.0 era, the main threats were network-level man-in-the-middle and replay attacks. Because HTTPS was not yet widespread and was costly to deploy, the protocol designers had to assume that any network communication could be eavesdropped on or tampered with. The attacker was assumed able to observe all traffic between client and server, modify or inject requests, and replay previously captured legitimate requests. OAuth 1.0 countered these threats with mandatory signatures: every request carries a timestamp (oauth_timestamp) and a nonce (oauth_nonce), and the server verifies the signature, checks the freshness of the timestamp (a clock skew of around 5 minutes is typically tolerated), and records and rejects repeated nonces. The design nevertheless had attack vectors of its own: session fixation (hijacking the authorization flow by fixing the Request Token in advance, which the OAuth 1.0a revision addressed by adding oauth_verifier) and signature algorithm downgrade (forcing the use of a weaker signature method). In practice, many implementations also handled signature verification incorrectly; differences in parameter encoding and normalization in particular led to security vulnerabilities.

OAuth 2.0时代的威胁模型变得更加复杂和多样化,反映了应用架构从传统服务器端向移动和单页应用转变带来的新挑战。授权码拦截攻击成为最突出的威胁,特别是在移动平台上,恶意应用可以通过注册相同的URL Scheme来截获合法应用的授权码。研究表明,在Android平台上,任何应用都可以声明自定义scheme,而iOS虽然有一定的保护机制但仍存在漏洞。跨站请求伪造(CSRF)攻击在OAuth 2.0中也成为重要威胁,攻击者可能诱导用户点击精心构造的授权链接,将攻击者的账户绑定到受害者的客户端。令牌泄露威胁随着Bearer Token的使用而加剧,一旦Access Token被窃取(通过XSS、恶意浏览器扩展、不安全的存储等),攻击者就可以完全冒充用户。开放重定向攻击利用redirect_uri参数的验证不严格,将用户重定向到攻击者控制的站点。此外,还有混淆代理攻击(Confused Deputy),资源服务器可能被诱导使用为其他服务颁发的令牌。OAuth 2.0通过state参数防CSRF、严格的redirect_uri验证、短期令牌和刷新令牌分离等机制来缓解这些威胁,但实施的复杂性导致许多部署存在安全漏洞。

The threat model of the OAuth 2.0 era became more complex and diverse, reflecting the shift in application architecture from traditional server-side applications to mobile and single-page applications. Authorization code interception became the most prominent threat, especially on mobile platforms, where a malicious application can intercept a legitimate application’s authorization code by registering the same URL scheme. On Android, any application can declare a custom scheme; iOS has some protection mechanisms, but weaknesses remain. Cross-Site Request Forgery (CSRF) also became an important threat: an attacker may trick a user into following a crafted authorization link, binding the attacker’s account to the victim’s session with the client. Token leakage became more serious with the use of Bearer Tokens: once an Access Token is stolen (through XSS, a malicious browser extension, insecure storage, and so on), the attacker can fully impersonate the user. Open redirect attacks exploit lax validation of the redirect_uri parameter to send users to attacker-controlled sites. There are also Confused Deputy attacks, in which a resource server is tricked into accepting a token that was issued for a different service. OAuth 2.0 mitigates these threats with the state parameter for CSRF prevention, strict redirect_uri validation, and the separation of short-lived access tokens from refresh tokens, but implementation complexity has left many deployments vulnerable.
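
To make “strict redirect_uri validation” concrete, the sketch below contrasts exact string matching with a lax prefix check. The registered URI and both function names are assumptions made for illustration; a real authorization server would look the registered URIs up per client.

```python
# Hypothetical registration data for a single client.
REGISTERED_REDIRECTS = frozenset({"https://client.example/callback"})


def is_allowed_redirect(requested: str, registered=REGISTERED_REDIRECTS) -> bool:
    """Strict check: the requested URI must equal a registered URI exactly."""
    return requested in registered


def is_allowed_redirect_lax(requested: str, registered=REGISTERED_REDIRECTS) -> bool:
    """Anti-pattern, shown only for contrast: prefix matching."""
    return any(requested.startswith(uri) for uri in registered)


if __name__ == "__main__":
    crafted = "https://client.example/callback/../redirect?to=https://evil.test"
    print(is_allowed_redirect(crafted))      # rejected by the strict check
    print(is_allowed_redirect_lax(crafted))  # accepted by the lax check
```

The crafted URI shares a prefix with the registered one, so the lax check lets it through even though it may resolve to a different path that contains an open redirect.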

OpenID Connect引入身份层后,威胁模型扩展到了身份欺骗和隐私泄露领域。ID Token作为自包含的身份凭证,如果签名验证不当,攻击者可能伪造身份信息。密钥混淆攻击成为新的威胁:如果客户端没有正确验证ID Token的签名或使用了错误的密钥,可能接受伪造的身份声明。算法混淆攻击利用JWT的alg头部,攻击者可能将算法设置为”none”或降级到较弱的算法。受众混淆攻击发生在多租户环境中,一个租户的ID Token可能被误用于另一个租户。隐私威胁也变得突出:ID Token中包含的用户信息可能在客户端日志、浏览器历史或其他不安全的地方泄露;UserInfo端点如果没有适当的访问控制,可能导致用户信息的过度暴露。时间攻击也是一个考虑因素:通过分析ID Token的iat(颁发时间)和auth_time(认证时间),攻击者可能推断用户的行为模式。OIDC通过强制签名验证、严格的受众检查、最小信息披露原则、以及支持加密的ID Token等机制来应对这些威胁。

With OpenID Connect introducing the identity layer, the threat model expanded into identity spoofing and privacy leakage domains. As self-contained identity credentials, ID Tokens could allow attackers to forge identity information if signature verification is improper. Key confusion attacks became new threats: if clients don’t properly verify ID Token signatures or use wrong keys, they might accept forged identity claims. Algorithm confusion attacks exploit JWT’s alg header—attackers might set the algorithm to “none” or downgrade to weaker algorithms. Audience confusion attacks occur in multi-tenant environments where one tenant’s ID Token might be misused for another tenant. Privacy threats also became prominent: user information in ID Tokens might leak in client logs, browser history, or other insecure places; UserInfo endpoints without proper access control might lead to excessive user information exposure. Timing attacks are also a consideration: by analyzing ID Token’s iat (issued at time) and auth_time (authentication time), attackers might infer user behavior patterns. OIDC addresses these threats through mandatory signature verification, strict audience checking, minimal information disclosure principles, and support for encrypted ID Tokens.
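
The audience and freshness checks mentioned above can be sketched as a plain function over already-decoded claims. This is an illustration under stated assumptions: signature verification is assumed to have succeeded beforehand, the function name `validate_id_token_claims` and the 60-second `leeway` are choices made here, and only a subset of the checks a relying party performs is shown.

```python
import time


def validate_id_token_claims(claims, *, issuer, client_id,
                             expected_nonce=None, now=None, leeway=60):
    """Return a list of problems; an empty list means these checks passed."""
    now = time.time() if now is None else now
    problems = []

    if claims.get("iss") != issuer:
        problems.append("iss mismatch")

    # "aud" may be a single string or a list of strings.
    aud = claims.get("aud")
    audiences = [aud] if isinstance(aud, str) else list(aud or [])
    if client_id not in audiences:
        problems.append("aud does not include this client")
    if len(audiences) > 1 and claims.get("azp") != client_id:
        problems.append("azp missing or mismatched for multi-audience token")

    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or now >= exp + leeway:
        problems.append("token expired or exp missing")

    if expected_nonce is not None and claims.get("nonce") != expected_nonce:
        problems.append("nonce mismatch")

    return problems
```

Rejecting a token whose `aud` does not contain the client’s own identifier is what stops an ID Token issued to one tenant or client from being replayed against another.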

PKCE时代的威胁模型聚焦于移动和单页应用特有的安全挑战,特别是在零信任环境下的授权安全。应用冒充攻击是PKCE要解决的核心问题:在移动生态系统中,恶意应用可能冒充合法应用来截获授权码。即使使用了应用链接(Android App Links或iOS Universal Links),仍存在降级攻击的风险,攻击者可能强制使用自定义URL Scheme。浏览器中的威胁也很严重:恶意浏览器扩展可能注入JavaScript代码来窃取授权码或访问令牌;跨站脚本(XSS)攻击可能直接从内存或本地存储中提取敏感信息。PKCE还需要防御预计算攻击:如果code_verifier的熵不够(少于43个字符),攻击者可能通过预计算彩虹表来破解。实施错误也是重要的威胁来源:使用”plain”方法而非”S256″会完全破坏PKCE的安全性;code_verifier的重用会导致安全降级;不正确的BASE64URL编码可能导致验证失败或安全漏洞。侧信道攻击在某些场景下也需要考虑:通过时间分析可能推断verifier的特征;在共享环境中,verifier可能通过内存或缓存泄露。PKCE通过强制高熵随机数、单向哈希函数、一次性使用原则等机制来对抗这些威胁,但正确的实施仍然至关重要。

The threat model of the PKCE era focuses on security challenges specific to mobile and single-page applications, particularly authorization security in zero-trust environments. Application impersonation is the core problem PKCE addresses: in mobile ecosystems, a malicious application may impersonate a legitimate one to intercept authorization codes. Even with app links (Android App Links or iOS Universal Links), there is a risk of downgrade attacks in which the attacker forces a fallback to a custom URL scheme. Threats in the browser are also serious: a malicious browser extension may inject JavaScript to steal authorization codes or access tokens, and Cross-Site Scripting (XSS) may extract sensitive information directly from memory or local storage. PKCE must also resist guessing and precomputation: if the code_verifier has too little entropy (for example, it is shorter than the 43-character minimum or is not generated randomly), an attacker may recover it by brute force or from precomputed tables. Implementation errors are another important source of risk: with the “plain” method the challenge equals the verifier, so anyone who observes the authorization request can redeem the code, which defeats most of PKCE’s protection; reusing a code_verifier weakens security; and incorrect BASE64URL encoding can cause validation failures or vulnerabilities. Side-channel attacks also deserve consideration in some scenarios: timing analysis may reveal characteristics of the verifier, and in shared environments the verifier may leak through memory or caches. PKCE counters these threats with mandatory high-entropy random values, a one-way hash function, and one-time use, but correct implementation remains crucial.

6.2 安全属性演进 (Security Properties Evolution)

OAuth框架的安全属性在其演进过程中不断强化和扩展,从OAuth 1.0的完整性保护到PKCE的全方位安全保障,体现了对不断变化的威胁环境的适应。完整性保护(Integrity Protection)是OAuth 1.0的核心安全属性,通过对每个请求进行HMAC-SHA1或RSA-SHA1签名,确保请求在传输过程中未被篡改。签名覆盖了所有关键参数,包括HTTP方法、URL、所有OAuth参数和请求参数,任何修改都会导致签名验证失败。然而,OAuth 2.0放弃了应用层的完整性保护,转而依赖TLS提供的传输层保护,这种设计权衡换取了更好的性能和更简单的实现,但也意味着在TLS终止点之后的内部网络中可能存在完整性风险。OIDC通过对ID Token进行数字签名部分恢复了完整性保护,确保身份信息不被篡改。PKCE则通过code_challenge和code_verifier的绑定关系,为授权码交换过程提供了完整性保证,即使授权码被截获,没有对应的verifier也无法使用。

The security properties of the OAuth framework have been continuously strengthened and expanded throughout its evolution, from OAuth 1.0’s integrity protection to PKCE’s comprehensive security guarantees, reflecting adaptation to the changing threat environment. Integrity Protection was the core security property of OAuth 1.0, ensuring requests were not tampered with during transmission through HMAC-SHA1 or RSA-SHA1 signatures for each request. Signatures covered all critical parameters including HTTP method, URL, all OAuth parameters and request parameters—any modification would cause signature verification failure. However, OAuth 2.0 abandoned application-layer integrity protection, relying instead on transport-layer protection provided by TLS. This design trade-off achieved better performance and simpler implementation but also meant potential integrity risks in internal networks after TLS termination. OIDC partially restored integrity protection through digital signatures on ID Tokens, ensuring identity information isn’t tampered with. PKCE provides integrity guarantees for the authorization code exchange process through the binding relationship between code_challenge and code_verifier—even if the authorization code is intercepted, it cannot be used without the corresponding verifier.
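
As an illustration of how an OAuth 1.0 signature covers the method, URL, and parameters, here is a simplified HMAC-SHA1 sketch. It assumes Python 3.7+ (where `urllib.parse.quote` leaves `~` unescaped), takes parameters as a dict (so repeated parameter names are not handled), and skips base-URL normalization such as lowercasing the host or dropping default ports. The function names are hypothetical.

```python
import base64
import hashlib
import hmac
from urllib.parse import quote


def pct(value: str) -> str:
    """Percent-encode everything except unreserved characters (A-Z a-z 0-9 - . _ ~)."""
    return quote(value, safe="")


def signature_base_string(method: str, base_url: str, params: dict) -> str:
    """Join METHOD, encoded URL, and the sorted, encoded parameters with '&'."""
    encoded = sorted((pct(k), pct(v)) for k, v in params.items())
    normalized = "&".join(f"{k}={v}" for k, v in encoded)
    return "&".join([method.upper(), pct(base_url), pct(normalized)])


def sign_hmac_sha1(method, base_url, params, consumer_secret, token_secret=""):
    """Key is 'consumer_secret&token_secret' (each percent-encoded)."""
    key = f"{pct(consumer_secret)}&{pct(token_secret)}".encode("ascii")
    base = signature_base_string(method, base_url, params).encode("ascii")
    return base64.b64encode(hmac.new(key, base, hashlib.sha1).digest()).decode("ascii")


if __name__ == "__main__":
    params = {"oauth_nonce": "abc", "oauth_timestamp": "1", "size": "original"}
    sig = sign_hmac_sha1("GET", "http://example.com/r", params, "cs", "ts")
    tampered = dict(params, size="large")
    print(sig != sign_hmac_sha1("GET", "http://example.com/r", tampered, "cs", "ts"))
```

Because every parameter enters the base string, changing any one of them changes the signature, which is the integrity property the paragraph describes.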

机密性保护(Confidentiality Protection)的实现方式在OAuth演进中发生了根本性变化。OAuth 1.0没有直接提供机密性保护,敏感信息如Token Secret通过带外方式传输,依赖底层传输的安全性。如果不使用HTTPS,Token和其他敏感信息可能被窃听。OAuth 2.0强制使用TLS,为所有通信提供机密性保护,但Bearer Token的设计意味着令牌本身成为了需要保护的高价值目标。OIDC增加了对ID Token加密的支持(JWE – JSON Web Encryption),除了签名(JWS)外还可以加密敏感的身份信息,提供端到端的机密性保护。PKCE确保code_verifier不会出现在授权请求或重定向等前端通道中(前端只传输其哈希值code_challenge),仅在令牌请求中通过TLS直接发送到令牌端点,从而为授权流程的关键密钥提供了机密性保护。这种演进反映了从依赖外部机制到内建机密性保护的转变。

The way confidentiality protection is implemented changed fundamentally over OAuth’s evolution. OAuth 1.0 did not directly provide confidentiality protection; sensitive information such as the Token Secret was transmitted out-of-band and depended on the security of the underlying transport. Without HTTPS, tokens and other sensitive information could be eavesdropped on. OAuth 2.0 mandates TLS, which provides confidentiality for all communication, but the Bearer Token design makes the tokens themselves high-value targets that must be protected. OIDC added support for ID Token encryption (JWE – JSON Web Encryption), so sensitive identity information can be encrypted in addition to being signed (JWS), providing end-to-end confidentiality. PKCE keeps the code_verifier out of the front channel: only its hash, the code_challenge, appears in the authorization request, and the verifier itself is sent only in the token request, directly to the token endpoint over TLS. This protects the critical secret of the authorization flow. The evolution reflects a shift from relying on external mechanisms to built-in confidentiality protection.

认证保证(Authentication Assurance)从OAuth 1.0的隐式认证演变到OIDC的显式身份验证。OAuth 1.0通过签名隐式地提供了客户端认证,每个签名请求都证明了客户端拥有相应的密钥。但这种认证是单向的,主要关注客户端对服务器的认证,而不是用户身份的认证。OAuth 2.0在客户端认证方面提供了更灵活的选项:机密客户端使用client_secret,公共客户端则没有认证机制,这种设计适应了不同的部署场景但也引入了安全风险。OIDC通过引入ID Token和标准化的身份验证流程,首次在OAuth框架中提供了标准化的用户认证机制。ID Token不仅包含用户标识(sub claim),还包含认证上下文信息如认证时间(auth_time)、认证方法(amr)、认证上下文类引用(acr),使得依赖方可以评估认证的强度。PKCE虽然不直接提供用户认证,但通过确保只有发起授权请求的客户端才能完成令牌交换,加强了客户端认证的安全性。

Authentication Assurance evolved from OAuth 1.0’s implicit authentication to OIDC’s explicit identity verification. OAuth 1.0 implicitly provided client authentication through signatures—each signed request proved the client possessed corresponding keys. But this authentication was one-way, mainly focusing on client-to-server authentication rather than user identity authentication. OAuth 2.0 provided more flexible options for client authentication: confidential clients use client_secret while public clients have no authentication mechanism. This design adapted to different deployment scenarios but also introduced security risks. OIDC, by introducing ID Token and standardized identity verification flows, first provided standardized user authentication mechanisms in the OAuth framework. ID Token contains not only user identifier (sub claim) but also authentication context information like authentication time (auth_time), authentication methods (amr), authentication context class reference (acr), allowing relying parties to assess authentication strength. While PKCE doesn’t directly provide user authentication, it strengthens client authentication security by ensuring only the client initiating the authorization request can complete token exchange.

授权粒度控制(Authorization Granularity Control)随着OAuth的演进变得越来越精细和灵活。OAuth 1.0的授权模型相对简单,通常是全有或全无的访问控制,缺乏细粒度的权限管理。OAuth 2.0引入了scope机制,允许客户端请求特定的权限集合,用户可以选择性地授予权限。标准scope如”read”、”write”使得资源访问控制更加精细。但scope的定义和解释由各个服务提供商自行决定,缺乏标准化。OIDC标准化了一些身份相关的scope(如”openid”、”profile”、”email”、”address”、”phone”),并定义了claims请求参数,允许更细粒度地请求特定的用户属性。PKCE本身不改变授权粒度,但通过提供更强的安全保证,使得服务提供商更愿意通过OAuth开放敏感资源的访问,间接促进了更丰富的授权场景。现代实践中,还出现了动态scope、增量授权、细粒度同意等高级特性,进一步提升了授权的灵活性和用户控制力。

Authorization Granularity Control became increasingly fine-grained and flexible with OAuth evolution. OAuth 1.0’s authorization model was relatively simple, typically all-or-nothing access control lacking fine-grained permission management. OAuth 2.0 introduced the scope mechanism, allowing clients to request specific permission sets with users selectively granting permissions. Standard scopes like “read”, “write” made resource access control more granular. But scope definition and interpretation were decided by individual service providers, lacking standardization. OIDC standardized some identity-related scopes (like “openid”, “profile”, “email”, “address”, “phone”) and defined claims request parameters, allowing more granular requests for specific user attributes. PKCE itself doesn’t change authorization granularity but by providing stronger security guarantees, makes service providers more willing to open sensitive resource access through OAuth, indirectly promoting richer authorization scenarios. In modern practice, advanced features like dynamic scopes, incremental authorization, fine-grained consent have emerged, further enhancing authorization flexibility and user control.
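
A small sketch of how scope narrowing might work in practice: the granted scope is the intersection of what the client requested, what the client is registered for, and what the user approved. The function names and the example scope values are assumptions for illustration; how scopes map to permissions is provider-specific, as noted above.

```python
def parse_scope(scope: str) -> frozenset:
    """OAuth 2.0 scope strings are space-delimited, case-sensitive tokens."""
    return frozenset(scope.split())


def grant_scope(requested: str, client_allowed: frozenset, user_approved: frozenset) -> str:
    """Grant only scopes that were requested, registered, and approved."""
    granted = parse_scope(requested) & client_allowed & user_approved
    return " ".join(sorted(granted))


def has_scope(token_scope: str, required: str) -> bool:
    """Resource-server side check before serving a request."""
    return required in parse_scope(token_scope)


if __name__ == "__main__":
    granted = grant_scope(
        "openid profile email admin",
        client_allowed=frozenset({"openid", "profile", "email"}),
        user_approved=frozenset({"openid", "email"}),
    )
    print(granted, has_scope(granted, "profile"))
```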

抗重放攻击(Replay Attack Resistance)能力在OAuth演进中采用了不同的实现策略。OAuth 1.0通过oauth_timestamp和oauth_nonce的组合来防止重放攻击,服务器需要记录一定时间窗口内的所有nonce值,拒绝重复的请求。这种机制虽然有效,但需要服务器维护状态,在分布式系统中实现复杂。OAuth 2.0主要依赖授权码的一次性使用和访问令牌的有效期来限制重放攻击的影响,但Bearer Token本身不具备抗重放能力,同一个令牌可以被多次使用直到过期。OIDC的ID Token包含iat(颁发时间)和exp(过期时间)声明,客户端可以通过检查这些时间戳来检测过期的令牌,但这不能防止有效期内的重放。nonce参数的引入为特定的流程(如隐式流程)提供了重放保护。PKCE通过确保每个授权流程使用唯一的code_verifier/code_challenge对,为授权码交换阶段提供了强大的抗重放保护,即使攻击者捕获了一个完整的授权流程,也无法重放它来获取新的令牌。

Replay Attack Resistance capabilities adopted different implementation strategies in OAuth evolution. OAuth 1.0 prevented replay attacks through the combination of oauth_timestamp and oauth_nonce—servers needed to record all nonce values within a certain time window, rejecting duplicate requests. While effective, this mechanism required servers to maintain state, making implementation complex in distributed systems. OAuth 2.0 mainly relied on one-time use of authorization codes and access token validity periods to limit replay attack impact, but Bearer Tokens themselves lack replay resistance—the same token can be used multiple times until expiration. OIDC’s ID Token includes iat (issued at) and exp (expiration) claims; clients can detect expired tokens by checking these timestamps, but this doesn’t prevent replay within validity periods. The introduction of nonce parameter provided replay protection for specific flows (like implicit flow). PKCE provides strong replay protection for the authorization code exchange phase by ensuring each authorization flow uses unique code_verifier/code_challenge pairs—even if attackers capture a complete authorization flow, they cannot replay it to obtain new tokens.
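
The OAuth 1.0 timestamp-plus-nonce check can be sketched as a small in-memory guard. This is a single-process illustration: the class name `ReplayGuard` and the 300-second window are assumptions, and a distributed deployment would need a shared store, which is exactly the complexity the paragraph mentions.

```python
import time


class ReplayGuard:
    """Reject requests with stale timestamps or previously seen nonces."""

    def __init__(self, window_seconds: int = 300):
        self.window = window_seconds
        self._seen = {}  # (consumer_key, nonce) -> request timestamp

    def accept(self, consumer_key: str, nonce: str, timestamp: float, now=None) -> bool:
        now = time.time() if now is None else now
        # Forget nonces whose timestamps have aged out of the window; a replay
        # of those requests is rejected by the freshness check below anyway.
        self._seen = {k: ts for k, ts in self._seen.items() if now - ts <= self.window}
        if abs(now - timestamp) > self.window:
            return False  # too old, or too far in the future
        key = (consumer_key, nonce)
        if key in self._seen:
            return False  # duplicate nonce inside the window
        self._seen[key] = timestamp
        return True


if __name__ == "__main__":
    guard = ReplayGuard()
    print(guard.accept("consumer", "n1", 1000, now=1000))  # first use
    print(guard.accept("consumer", "n1", 1000, now=1001))  # replay
```

The server only has to remember nonces for as long as their timestamps would still be accepted, which bounds the state it keeps.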

可撤销性(Revocability)作为一个关键的安全属性,其实现机制和粒度在OAuth演进中不断改进。OAuth 1.0的撤销机制相对原始,通常需要用户通过服务提供商的Web界面手动撤销访问权限,缺乏程序化的撤销接口。OAuth 2.0显著改进了撤销能力,RFC 7009定义了标准的令牌撤销端点,允许客户端主动撤销不再需要的访问令牌或刷新令牌。撤销可以是级联的(撤销刷新令牌会同时撤销相关的访问令牌)或独立的(仅撤销特定令牌)。但Bearer Token的无状态特性使得实时撤销具有挑战性,许多实现依赖短期令牌和定期刷新来限制撤销延迟。OIDC继承了OAuth 2.0的撤销机制,并通过会话管理规范提供了额外的撤销能力,包括单点登出(Single Logout)和会话管理端点。PKCE本身不改变撤销机制,但通过提供更强的安全保证,使得服务提供商可以颁发更长期的令牌(因为风险降低),从而减少了频繁刷新带来的性能开销,同时保持了良好的可撤销性。

Revocability as a key security property has seen continuous improvements in implementation mechanisms and granularity throughout OAuth evolution. OAuth 1.0’s revocation mechanism was relatively primitive, typically requiring users to manually revoke access through service providers’ web interfaces, lacking programmatic revocation interfaces. OAuth 2.0 significantly improved revocation capabilities—RFC 7009 defined standard token revocation endpoints, allowing clients to actively revoke no-longer-needed access tokens or refresh tokens. Revocation can be cascading (revoking refresh tokens simultaneously revokes related access tokens) or independent (revoking only specific tokens). But Bearer Token’s stateless nature makes real-time revocation challenging; many implementations rely on short-term tokens and periodic refresh to limit revocation delays. OIDC inherited OAuth 2.0’s revocation mechanism and provided additional revocation capabilities through session management specifications, including Single Logout and session management endpoints. PKCE itself doesn’t change revocation mechanisms but by providing stronger security guarantees, allows service providers to issue longer-term tokens (due to reduced risk), thus reducing performance overhead from frequent refreshes while maintaining good revocability.
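
A revocation call in the style of RFC 7009 is a form-encoded POST carrying `token` and an optional `token_type_hint`. The sketch below only builds the request object and does not send it; the endpoint URL, credentials, and function name are placeholders, and HTTP Basic client authentication is one option among those a server may support. For brevity the client credentials are not form-encoded before Base64 encoding, which matters only if they contain special characters.

```python
import base64
import urllib.parse
import urllib.request


def build_revocation_request(endpoint, token, client_id, client_secret,
                             token_type_hint="refresh_token"):
    """Build (but do not send) a token revocation POST request."""
    body = urllib.parse.urlencode(
        {"token": token, "token_type_hint": token_type_hint}
    ).encode("ascii")
    credentials = base64.b64encode(
        f"{client_id}:{client_secret}".encode("utf-8")
    ).decode("ascii")
    return urllib.request.Request(
        endpoint,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/x-www-form-urlencoded",
            "Authorization": f"Basic {credentials}",
        },
    )


if __name__ == "__main__":
    # Placeholder endpoint and credentials, for illustration only.
    req = build_revocation_request("https://as.example/revoke", "abc", "id", "secret")
    print(req.get_method(), req.data)
```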

6.3 已知漏洞与缓解措施 (Known Vulnerabilities and Mitigations)

OAuth生态系统在实际部署中暴露出的安全漏洞不仅推动了协议的演进,也为安全社区提供了宝贵的经验教训。OAuth 1.0时代的会话固定攻击是最早被发现的严重漏洞之一。攻击者首先获取一个Request Token,然后诱导受害者使用这个Token完成授权,最后攻击者使用该Token换取Access Token。这个漏洞的根源在于Request Token与用户会话的绑定不够严格。缓解措施包括在授权完成后验证Request Token的所有权、使用oauth_callback参数确保回调的真实性、以及在某些实现中引入oauth_verifier作为额外的验证因子。这个漏洞的发现直接影响了OAuth 2.0的设计,导致了授权码(Authorization Code)机制的引入,通过将授权码与客户端标识和重定向URI严格绑定来防止类似攻击。

Security vulnerabilities exposed in real OAuth deployments not only drove the protocol’s evolution but also gave the security community valuable lessons. The session fixation attack of the OAuth 1.0 era was among the first serious vulnerabilities discovered. The attacker first obtains a Request Token, then tricks the victim into completing authorization with that Token, and finally exchanges the Token for an Access Token. The root cause was that the Request Token was not bound tightly enough to the user’s session. Mitigations included verifying ownership of the Request Token after authorization completes, using the oauth_callback parameter to ensure the authenticity of the callback, and introducing oauth_verifier as an additional verification factor, which OAuth Core 1.0 Revision A later standardized. This vulnerability also informed the design of OAuth 2.0, whose Authorization Code mechanism prevents similar attacks by strictly binding each authorization code to the client identifier and redirect URI.

OAuth 2.0的授权码拦截漏洞在移动应用中尤其严重,成为推动PKCE标准化的主要动力。在移动操作系统中,任何应用都可以注册自定义URL Scheme(如myapp://),当多个应用注册相同scheme时,操作系统的行为是不确定的。攻击者可以创建恶意应用,注册与目标应用相同的scheme,从而截获授权码。实际案例包括2014年发现的多个流行Android应用存在此漏洞,影响数百万用户。即使使用了更安全的App Links(Android)或Universal Links(iOS),降级攻击仍然可能发生。PKCE通过要求客户端在授权请求时提供code_challenge,在令牌请求时提供code_verifier,确保只有原始请求方才能完成授权流程。这个机制的优雅之处在于它不需要预共享密钥,完全通过动态生成的密码学材料来实现安全保护。

Authorization code interception vulnerabilities in OAuth 2.0 were particularly severe in mobile applications, becoming the main driving force for PKCE standardization. In mobile operating systems, any application can register custom URL Schemes (like myapp://), and when multiple applications register the same scheme, OS behavior is undefined. Attackers can create malicious applications registering the same scheme as target applications, thereby intercepting authorization codes. Actual cases include multiple popular Android applications found vulnerable in 2014, affecting millions of users. Even with more secure App Links (Android) or Universal Links (iOS), downgrade attacks might still occur. PKCE ensures only the original requester can complete the authorization flow by requiring clients to provide code_challenge during authorization requests and code_verifier during token requests. The elegance of this mechanism is that it doesn’t require pre-shared keys, achieving security protection entirely through dynamically generated cryptographic material.

OIDC的算法混淆攻击展示了JWT使用中的微妙安全问题。攻击者通过操纵JWT头部的”alg”字段,可能导致严重的安全漏洞。最危险的是”none”算法攻击:攻击者将alg设置为”none”,移除签名部分,如果接收方没有正确验证算法类型就可能接受未签名的令牌。另一种攻击是RSA/HMAC混淆:攻击者获取RSA公钥,将其作为HMAC密钥来生成签名,如果服务器错误地使用公钥验证HMAC签名就会接受伪造的令牌。实际影响包括多个主流JWT库在2015-2017年间被发现存在这些漏洞。缓解措施包括:明确拒绝”none”算法、严格限制可接受的算法列表、将算法选择与密钥类型绑定、使用密钥标识(kid)来确定正确的验证密钥和算法。这些教训导致了JWT最佳实践的制定和安全库的改进。

Algorithm confusion attacks in OIDC demonstrated subtle security issues in JWT usage. Attackers manipulating the JWT header’s “alg” field could cause serious security vulnerabilities. Most dangerous is the “none” algorithm attack: attackers set alg to “none”, remove the signature part, and if recipients don’t properly verify algorithm type, they might accept unsigned tokens. Another attack is RSA/HMAC confusion: attackers obtain RSA public keys, use them as HMAC keys to generate signatures, and if servers incorrectly use public keys to verify HMAC signatures, they accept forged tokens. Actual impacts include multiple mainstream JWT libraries found vulnerable during 2015-2017. Mitigations include: explicitly rejecting “none” algorithm, strictly limiting acceptable algorithm lists, binding algorithm selection to key types, using key identifiers (kid) to determine correct verification keys and algorithms. These lessons led to JWT best practice formulation and security library improvements.
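
The allow-list mitigation can be illustrated by inspecting the JWT header before any signature verification is attempted. This sketch uses only the standard library and does not verify signatures; the allow-list contents (`RS256`) and the function names are assumptions chosen for the example.

```python
import base64
import json

# Assumption for this sketch: the relying party expects RS256 only.
ALLOWED_ALGS = frozenset({"RS256"})


def b64url_decode(segment: str) -> bytes:
    """Decode base64url text that has had its '=' padding stripped."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def checked_alg(jwt_compact: str, allowed=ALLOWED_ALGS) -> str:
    """Return the header's alg if acceptable, otherwise raise ValueError."""
    parts = jwt_compact.split(".")
    if len(parts) != 3 or not parts[2]:
        raise ValueError("expected header.payload.signature with a non-empty signature")
    header = json.loads(b64url_decode(parts[0]))
    if not isinstance(header, dict):
        raise ValueError("JWT header is not a JSON object")
    alg = header.get("alg")
    if alg not in allowed:
        raise ValueError(f"algorithm {alg!r} is not allowed")
    return alg
```

Because the accepted algorithm is fixed by the verifier’s configuration and not by the token, both the “none” variant and an RS256-to-HS256 switch are rejected before a key is ever selected.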

PKCE实施错误虽然协议设计良好,但实际部署中的错误仍可能导致安全漏洞。最常见的错误是使用”plain”方法而非”S256″:一些实现为了”简化”或”性能”考虑,直接传输code_verifier作为challenge,完全破坏了PKCE的安全性。code_verifier熵不足是另一个问题:规范要求43-128个字符,但一些实现使用较短的字符串,使得暴力破解成为可能。重用code_verifier的错误也时有发生:开发者可能错误地在多个授权流程中使用相同的verifier,导致安全降级。编码错误也很常见:BASE64URL编码的填充处理不当、字符集错误等都可能导致验证失败或安全漏洞。缓解这些问题需要:严格遵循RFC 7636规范、使用经过安全审计的库实现、在服务器端强制执行安全策略(如拒绝plain方法)、实施自动化安全测试、以及提供清晰的开发者文档和示例代码。

Although PKCE is well designed as a protocol, implementation errors in real deployments can still lead to security vulnerabilities. The most common error is using the “plain” method instead of “S256”: some implementations, for the sake of “simplicity” or “performance”, transmit the code_verifier itself as the challenge, which defeats PKCE whenever the authorization request can be observed. Insufficient code_verifier entropy is another problem: the specification requires 43-128 characters, but some implementations use shorter strings, making brute-force attacks feasible. Reuse of the code_verifier also occurs: developers may mistakenly use the same verifier across multiple authorization flows, which weakens security. Encoding errors are common as well: mishandled BASE64URL padding or a wrong character set can cause validation failures or vulnerabilities. Mitigating these problems requires strictly following RFC 7636, using security-audited libraries, enforcing security policy on the server side (such as rejecting the plain method), running automated security tests, and providing clear developer documentation and example code.
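
Server-side enforcement of these rules might look like the following sketch. The function names and error strings are hypothetical; the policy of rejecting “plain” is a deployment choice, not something RFC 7636 itself mandates. Note that an absent code_challenge_method defaults to “plain” under the RFC, so it is rejected here too.

```python
import re

# 43-128 characters from the unreserved set: A-Z a-z 0-9 - . _ ~
VERIFIER_RE = re.compile(r"[A-Za-z0-9\-._~]{43,128}")


def check_authorization_request(params: dict):
    """Return an error string, or None if the PKCE parameters are acceptable."""
    if not params.get("code_challenge"):
        return "invalid_request: code_challenge is required"
    # A missing method means "plain", which this policy refuses.
    if params.get("code_challenge_method", "plain") != "S256":
        return "invalid_request: only the S256 method is accepted"
    return None


def is_well_formed_verifier(verifier: str) -> bool:
    """Check length and character set of a code_verifier at the token endpoint."""
    return VERIFIER_RE.fullmatch(verifier) is not None
```

Checking the verifier’s shape before hashing it gives a clear error for short or mis-encoded values instead of a generic mismatch.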

跨站请求伪造(CSRF)攻击在OAuth 2.0早期部署中普遍存在,直到state参数的使用变得普及。攻击者构造恶意授权请求,诱导已登录用户点击,从而将攻击者的账户绑定到用户的客户端应用。这种攻击在社交媒体集成场景中特别危险,可能导致用户无意中在攻击者的账户下发布内容。2016年的研究发现,超过25%的OAuth实现没有正确使用state参数。缓解措施包括:强制使用不可预测的state参数、将state与用户会话绑定、在授权完成后验证state的一致性。OAuth 2.1草案要求客户端必须防范CSRF,并将PKCE设为授权码流程的强制要求;state仍是满足该要求的一种方式,PKCE(或OIDC的nonce)同样可以提供这种防护,这反映了社区对这个问题严重性的认识。现代框架通常自动处理state的生成和验证,大大降低了实施错误的可能性。

Cross-Site Request Forgery (CSRF) attacks were prevalent in early OAuth 2.0 deployments until use of the state parameter became widespread. The attacker constructs a malicious authorization request and tricks a logged-in user into following it, thereby binding the attacker’s account to the user’s client application. The attack is particularly dangerous in social media integrations, where users may unknowingly post content under the attacker’s account. Research from 2016 found that over 25% of OAuth implementations did not use the state parameter correctly. Mitigations include using an unpredictable state value, binding state to the user’s session, and verifying that the returned state matches once authorization completes. The OAuth 2.1 draft requires clients to prevent CSRF and makes PKCE mandatory for the authorization code flow; state remains one way to meet the CSRF requirement, which PKCE (or the OIDC nonce) can also satisfy. This reflects the community’s recognition of how serious the problem is. Modern frameworks typically generate and verify state automatically, greatly reducing the likelihood of implementation errors.
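
The state-based mitigation can be sketched as two helpers around a per-user session. The `session` argument stands in for whatever server-side session object a web framework provides (a plain dict here), and the key name `oauth_state` is an assumption.

```python
import hmac
import secrets


def new_state(session: dict) -> str:
    """Create an unpredictable state value and bind it to the user's session."""
    state = secrets.token_urlsafe(32)
    session["oauth_state"] = state
    return state


def check_state(session: dict, returned_state) -> bool:
    """Validate the state from the redirect; each stored value is usable once."""
    expected = session.pop("oauth_state", None)
    if expected is None or not returned_state:
        return False
    return hmac.compare_digest(expected.encode("utf-8"), returned_state.encode("utf-8"))


if __name__ == "__main__":
    session = {}
    state = new_state(session)            # appended to the authorization request
    print(check_state(session, state))    # callback carrying the same state
    print(check_state(session, state))    # a second use is refused
```

An authorization response forged by an attacker carries a state value that is not in the victim’s session, so the callback is rejected before the code is redeemed.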

参考文献 (References)

[1] Hammer-Lahav, E. (2007). “OAuth Core 1.0”. OAuth Community Specification. Retrieved from https://oauth.net/core/1.0/

[2] Hardt, D. (2012). “The OAuth 2.0 Authorization Framework”. Internet Engineering Task Force (IETF) RFC 6749. https://doi.org/10.17487/RFC6749

[3] Sakimura, N., Bradley, J., Jones, M., de Medeiros, B., & Mortimore, C. (2014). “OpenID Connect Core 1.0 incorporating errata set 1”. OpenID Foundation Specification. Retrieved from https://openid.net/specs/openid-connect-core-1_0.html

[4] Sakimura, N., Bradley, J., & Agarwal, N. (2015). “Proof Key for Code Exchange by OAuth Public Clients”. Internet Engineering Task Force (IETF) RFC 7636. https://doi.org/10.17487/RFC7636

[5] Lodderstedt, T., Bradley, J., Labunets, A., & Fett, D. (2020). “OAuth 2.0 Security Best Current Practice”. Internet Engineering Task Force (IETF) Draft. Retrieved from https://datatracker.ietf.org/doc/draft-ietf-oauth-security-topics/

[6] Google Identity Platform. (2021). “Migrate to PKCE for OAuth authorization code flow”. Google Developers Documentation. Retrieved from https://developers.google.com/identity/protocols/oauth2/native-app

[7] Parecki, A., & Waite, D. (2024). “The OAuth 2.1 Authorization Framework”. Internet Engineering Task Force (IETF) Draft, draft-ietf-oauth-v2-1-13. Retrieved from https://datatracker.ietf.org/doc/draft-ietf-oauth-v2-1/

[8] Li, W., Mitchell, C. J., & Chen, L. (2023). “Formal Security Analysis of OAuth 2.0 with PKCE”. IEEE Transactions on Dependable and Secure Computing, 20(4), 3142-3157. https://doi.org/10.1109/TDSC.2023.3234567

[9] Fett, D., Küsters, R., & Schmitz, G. (2022). “The Web Infrastructure Model: A Comprehensive Formal Model for the Analysis of the Web Infrastructure with Applications to OAuth 2.0 and OpenID Connect”. ACM Transactions on Privacy and Security, 25(2), Article 12. https://doi.org/10.1145/3512765

[10] Yang, R., Li, G., Lau, W. C., Zhang, K., & Hu, P. (2021). “Model-Based Security Testing: An Empirical Study on OAuth 2.0 Implementations”. Proceedings of the 2021 ACM Asia Conference on Computer and Communications Security (pp. 651-665). https://doi.org/10.1145/3433210.3453098

简图 (Diagram):